Learning Stories: AdWords Experiments

The exception that proves the rule. Does a better position in AdWords mean better results? If you bid more, will you convert better? In some cases, improving the position of a given keyword can cost you dearly. Find out how to run the test without putting your account's performance at risk.

Case study: AdWords bid experiments. Let's get started!

Although logic tells us that a keyword in a higher position should deliver better results, we know that in some cases lower positions can perform better in terms of ROI.

This often happens with overly generic terms, for example: even if users type a broad term into the search box, they will scan the results page for an ad that matches what they actually need.

It also happens with difficult searches, for example for products or services so innovative that people don't know about them yet. No set of keywords really describes them, but demand can be redirected from other, related searches.

Problem

When we see that a keyword performs well and we want to exploit its potential, we usually review the impressions it accumulates and whether there is room to grow (impression share lost to rank or to budget). If we detect impression share lost to rank, we tend to raise the bid to improve our chances of getting results.
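The triage described above can be sketched as a small rule. This is a minimal sketch assuming you have exported, per keyword, the impression share lost to rank and to budget as fractions; the `KeywordStats` class, the `growth_lever` function, and the 10% threshold are hypothetical choices for illustration, not AdWords features.

```python
from dataclasses import dataclass


@dataclass
class KeywordStats:
    # Hypothetical per-keyword metrics, expressed as fractions between 0.0 and 1.0.
    text: str
    lost_is_rank: float    # impression share lost to rank
    lost_is_budget: float  # impression share lost to budget


def growth_lever(kw: KeywordStats, threshold: float = 0.10) -> str:
    """Suggest which lever to look at first; the 10% threshold is an assumption."""
    if kw.lost_is_budget >= threshold and kw.lost_is_budget >= kw.lost_is_rank:
        return "budget"  # growth is capped by budget rather than by bids
    if kw.lost_is_rank >= threshold:
        return "bid"  # a candidate for a bid test, not an automatic bid raise
    return "none"  # little headroom either way


print(growth_lever(KeywordStats("car insurance", lost_is_rank=0.35, lost_is_budget=0.05)))
```

Returning "bid" only flags a keyword as a candidate for testing; it does not say that a higher bid will pay off.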

The client in this case works with a high volume of generic keywords, and we need to know precisely whether the extra rank we can gain by raising bids will improve results. We need to know this in advance because, once the changes are made, they can be hard to reverse (generic keywords are very sensitive to bid changes).

For this particular client, applying bulk changes without anticipating the consequences could seriously damage the account's performance, since around 80% of its results come from generic keywords.

Solution

AdWords experiments, which let us compare results for the same keywords at different bids, are very useful in these cases: they give us the statistical reliability we need to avoid making the wrong changes.

Bear in mind that experiments can affect the Quality Score of our keywords.

How to set it up

In the campaign's Settings tab there is an “Experiments” section. Setting one up is very simple.

1. Creating the experiment

Create the experiment and decide what percentage of impressions to allocate to it, that is, for how many impressions you will bid with your usual bid and for how many with the new one. To make this decision, consider how much you are willing to risk.

The larger the percentage of impressions allocated to the experiment, the sooner you will have data on how the new bids perform. However, your results may also be more heavily affected while the experiment runs.

In this case we decided to allocate 50% of impressions to the experiment. The decision was based on how quickly we needed to be able to predict the results:

➡ If we want data soon, we should use a high split percentage (50%). If, on the other hand, we don't want to risk much each day and prefer to experiment gradually, we will use lower percentages.
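The trade-off between split size and speed can be made concrete with simple arithmetic. This is a minimal sketch assuming stable daily traffic that divides exactly according to the split; `days_to_sample`, the 400 clicks per day, and the 2,000-click target per arm are hypothetical figures, not data from this client.

```python
import math


def days_to_sample(daily_clicks: float, split: float, target_clicks_per_arm: int) -> int:
    """Days until both the control and the experiment arm reach the target clicks.

    Assumes traffic is stable and is divided exactly in proportion to the split.
    """
    if not 0 < split < 1:
        raise ValueError("split must be strictly between 0 and 1")
    slowest_arm_share = min(split, 1 - split)  # the smaller arm fills up last
    return math.ceil(target_clicks_per_arm / (daily_clicks * slowest_arm_share))


for split in (0.1, 0.2, 0.5):
    print(split, days_to_sample(daily_clicks=400, split=split, target_clicks_per_arm=2000))
```

With these assumed numbers, a 10% split needs 50 days, a 20% split 25 days, and a 50% split 10 days. Going above 50% does not help further, because the control arm then becomes the slower one.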

2. Experiment duration

Next, choose how long the experiment will run. You can set it to 30 days or set the end date manually. Since we don't know when we will have enough data to draw conclusions, it is best to set a distant end date. If we have the data we need before that date, we can stop the experiment at any time.
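Choosing a distant end date makes sense because the time needed depends on how small a difference you want to detect. This is a minimal sketch using the textbook sample-size approximation for comparing two conversion rates (a standard formula swapped in for illustration, not necessarily what AdWords uses internally); `clicks_per_arm` is a hypothetical helper, and the 5% baseline and 6% target conversion rates are assumed values.

```python
import math
from statistics import NormalDist


def clicks_per_arm(p_control: float, p_experiment: float,
                   alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate clicks needed in each arm to detect p_control vs p_experiment.

    Normal-approximation formula for a two-sided, two-proportion test.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p_control * (1 - p_control) + p_experiment * (1 - p_experiment)
    effect = p_experiment - p_control
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


print(clicks_per_arm(0.05, 0.06))
```

Under these assumptions each arm needs a little over 8,000 clicks, and halving the expected lift roughly quadruples that figure, which is why it is hard to know in advance when the results will be conclusive.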

3. Changing the bids

Then edit the keywords whose bids you want to test. Go to the Keywords tab and expand the Experiment segment to see the option to set a different bid.

Set different bids for a keyword by expanding the Experiment segment

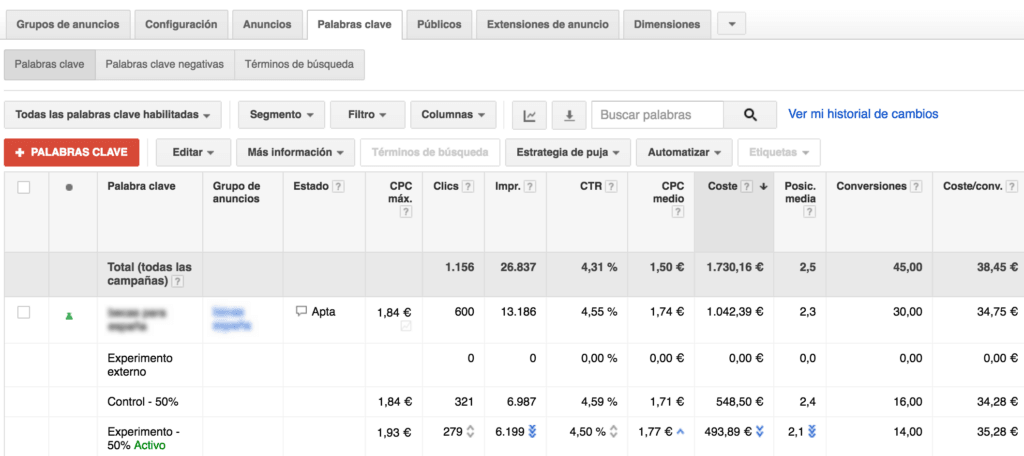

In our case we raised bids by about 20% for this list of the most generic keywords, and we monitored the results every 3-4 days to keep track of the experiment's status.
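Applying a uniform uplift to a list of bids is simple arithmetic, but rounding to the currency's smallest unit is easy to get wrong with floating-point numbers. This is a minimal sketch assuming bids in euros; `experiment_bid` and the keyword list are hypothetical, and the resulting bids would still be entered in the Experiment segment by hand.

```python
from decimal import Decimal, ROUND_HALF_UP


def experiment_bid(base_bid: Decimal, uplift: Decimal = Decimal("0.20")) -> Decimal:
    """Raise base_bid by uplift (0.20 means +20%) and round to the cent."""
    return (base_bid * (1 + uplift)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Hypothetical generic keywords and their current (control) max CPC bids.
current_bids = {"car insurance": Decimal("1.35"), "cheap insurance": Decimal("0.90")}
experiment_bids = {kw: experiment_bid(bid) for kw, bid in current_bids.items()}
print(experiment_bids)
```

`Decimal` keeps amounts such as 1.35 exact, so the +20% bids come out as 1.62 and 1.08 with no floating-point drift.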

Results are marked with blue arrows once they reach sufficient statistical significance.
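To see what "sufficient statistical significance" means in practice, you can run a check on exported numbers. This is a minimal sketch using a standard two-proportion z-test on conversion rates; the interface's exact method is not described here, so treat this as a swapped-in technique. `two_proportion_z_test` and the figures are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, clicks_a: int, conv_b: int, clicks_b: int):
    """Two-sided z-test comparing the conversion rates of control (a) and experiment (b)."""
    p_a = conv_a / clicks_a
    p_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)  # common rate if there is no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical numbers: 100 vs 105 conversions on 2,000 clicks per arm.
z, p = two_proportion_z_test(100, 2000, 105, 2000)
print(f"z = {z:.2f}, p = {p:.2f}")
```

A p-value well above 0.05, as in this example, means the gap between the arms could easily be noise, so it is worth waiting before acting on it.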

End of the experiment: now what?

Once the experiment is over, we can choose whether or not to apply the changes. What if we only want to apply them to some of the keywords? Then we have to do it manually: discard the experiment's changes and set the bids we want on each keyword. What a chore!
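Deciding keyword by keyword which bid to keep can at least be worked out in a script before making the changes by hand. This is a minimal sketch assuming you have exported cost, conversions, and bid per keyword for each arm; `ArmStats`, `choose_bids`, and the figures are hypothetical, and a real decision should also check that each difference is statistically significant.

```python
import math
from dataclasses import dataclass


@dataclass
class ArmStats:
    cost: float       # spend in this arm
    conversions: int  # leads or conversions in this arm
    bid: float        # max CPC bid used in this arm


def cpl(arm: ArmStats) -> float:
    """Cost per lead; infinite when the arm had no conversions."""
    return arm.cost / arm.conversions if arm.conversions else math.inf


def choose_bids(results: dict) -> dict:
    """Map each keyword to the bid of the arm with the lower CPL (control wins ties)."""
    return {
        kw: exp.bid if cpl(exp) < cpl(ctrl) else ctrl.bid
        for kw, (ctrl, exp) in results.items()
    }


# Hypothetical results: (control, experiment) per keyword.
results = {
    "car insurance": (ArmStats(500.0, 25, 1.35), ArmStats(650.0, 26, 1.62)),
    "insurance quote": (ArmStats(300.0, 10, 0.90), ArmStats(330.0, 15, 1.08)),
}
print(choose_bids(results))
```

Letting the control bid win ties keeps the current setup unless the experiment is strictly cheaper per lead.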

Results

For this client and these specific keywords, the experimental bid increase only raised CPCs and CTRs; it did not produce more conversions. Each conversion simply cost us more: the CPL (cost per lead) was higher on the keywords with higher bids.
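The arithmetic behind this result is straightforward: CPL is cost divided by conversions, so if a higher bid raises CPC and clicks while conversions stay flat, every lead costs more. This is a minimal sketch with hypothetical figures (not the client's data); `summarize` is an illustrative helper.

```python
def summarize(impressions: int, clicks: int, cost: float, conversions: int) -> dict:
    """Return CTR, CPC, and CPL (cost per lead) for one arm of the experiment."""
    return {
        "ctr": clicks / impressions,
        "cpc": cost / clicks,
        "cpl": cost / conversions,
    }


# Hypothetical arms: the experiment gets more clicks at a higher CPC, same conversions.
control = summarize(impressions=20_000, clicks=1_000, cost=1_000.0, conversions=50)
experiment = summarize(impressions=20_000, clicks=1_100, cost=1_320.0, conversions=50)

for metric in ("ctr", "cpc", "cpl"):
    print(f"{metric}: control {control[metric]:.3f}, experiment {experiment[metric]:.3f}")
```

In this hypothetical case CTR rises from 5% to 5.5% and CPC from 1.00 to 1.20, but with the same 50 conversions the CPL goes from 20.00 to 26.40.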

As you will have guessed, we ended the experiment without applying the changes. It confirmed that higher bids do not always lead to better results. And you, how do you put your ideas to the test?